The kernel is a computer program that is the core of a computer's operating system, with complete control over everything in the system.[1] On most systems it is one of the first programs loaded on start-up, after the bootloader. It handles the rest of start-up as well as input/output requests from software, translating them into data-processing instructions for the central processing unit. It also manages memory and peripherals such as keyboards, monitors, printers, and speakers.

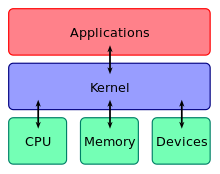

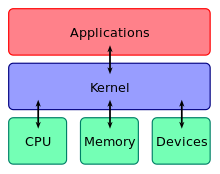

A kernel connects the application software to the hardware of a computer.

The critical code of the kernel is usually loaded into a protected area of memory, which prevents it from being overwritten by applications or by other, less critical parts of the operating system. The kernel performs its tasks, such as running processes and handling interrupts, in kernel space. In contrast, everything a user does happens in user space: writing text in a text editor, running programs in a GUI, and so on. This separation prevents user data and kernel data from interfering with each other, which would otherwise cause instability and slowness.[1]

The kernel's interface is a low-level abstraction layer. A request that a process makes of the kernel is called a system call. Kernel designs differ in how they manage these system calls and resources. A monolithic kernel runs all operating system instructions in the same address space, for speed. A microkernel runs most operating system services in user space,[2] for modularity.[3]
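A system call can be pictured as a lookup in a table of kernel handlers. The following Python sketch simulates that dispatch step under stated assumptions. The `Kernel` class, the syscall numbers `SYS_WRITE` and `SYS_GETPID`, and the handler names are all hypothetical. A real kernel is entered through a CPU trap or a dedicated instruction rather than an ordinary function call.

```python
# Hypothetical syscall numbers, invented for this sketch.
SYS_WRITE = 1
SYS_GETPID = 2


class Kernel:
    """Toy kernel that dispatches system calls through a table."""

    def __init__(self):
        self.console = []  # stands in for an output device
        # Dispatch table: syscall number -> handler method.
        self.syscall_table = {
            SYS_WRITE: self.sys_write,
            SYS_GETPID: self.sys_getpid,
        }

    def sys_write(self, pid, text):
        self.console.append(text)
        return len(text)  # number of characters "written"

    def sys_getpid(self, pid):
        return pid

    def syscall(self, pid, number, *args):
        # Entry point a user process would reach through a trap.
        handler = self.syscall_table.get(number)
        if handler is None:
            return -1  # unknown call: report an error instead of crashing
        return handler(pid, *args)


kernel = Kernel()
print(kernel.syscall(42, SYS_WRITE, "hello"))  # 5
print(kernel.syscall(42, SYS_GETPID))          # 42
print(kernel.syscall(42, 99))                  # -1 (no such call)
```

In a monolithic kernel, handlers like these would run in kernel space. A microkernel would instead forward many such requests to user-space servers.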

The kernel's primary function is to mediate access to the computer's resources, including:[4]

The central processing unit

This central component of a computer system is responsible for running, or executing, programs. The kernel decides at any given time which of the many running programs should be allocated to the processor or processors, each of which can usually run only one program at a time.

Random-access memory

Random-access memory is used to store both program instructions and data. Typically, both need to be present in memory in order for a program to execute. Often multiple programs will want access to memory, frequently demanding more memory than the computer has available. The kernel is responsible for deciding which memory each process can use, and determining what to do when not enough memory is available.

Input/output (I/O) devices

I/O devices include peripherals such as keyboards, mice, disk drives, printers, network adapters, and display devices. The kernel routes applications' I/O requests to an appropriate device and provides convenient methods for using the device. These methods are typically abstracted to the point where the application does not need to know the device's implementation details.
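As an illustration of the CPU-allocation decision described above, here is a sketch of round-robin scheduling. It is one simple policy among many; the text does not prescribe a particular algorithm. The function name `round_robin`, the process names, and the time units are assumptions for the example, and the model covers a single processor only.

```python
from collections import deque


def round_robin(bursts, quantum):
    """Simulate round-robin CPU scheduling on a single processor.

    bursts: dict mapping process name -> CPU time it still needs.
    quantum: maximum time slice a process runs before preemption.
    Returns the sequence of (process, time_run) slices.
    """
    ready = deque(bursts.items())  # ready queue of (name, remaining)
    timeline = []
    while ready:
        name, remaining = ready.popleft()
        run = min(quantum, remaining)
        timeline.append((name, run))
        remaining -= run
        if remaining > 0:
            ready.append((name, remaining))  # preempted: back of the queue
    return timeline


print(round_robin({"A": 5, "B": 2, "C": 3}, quantum=2))
# [('A', 2), ('B', 2), ('C', 2), ('A', 2), ('C', 1), ('A', 1)]
```

A short quantum makes the system more responsive, but each preemption costs a context switch. The quantum is therefore a trade-off rather than a fixed value.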

Key aspects of resource management are the definition of an execution domain (address space) and the protection mechanism used to mediate access to the resources within a domain.[4]

Kernels also usually provide methods for synchronization and communication between processes, called inter-process communication (IPC).

A kernel may implement these features itself or rely on some of the processes it runs to provide them to other processes. In the latter case, it must provide some means of IPC so that processes can access the facilities provided by each other.
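The case where a kernel relies on other processes to provide facilities can be sketched with message passing. In this hypothetical Python model, the kernel offers only `send` and `receive`, and a user-space file server answers requests from a client. The class `MessageKernel`, the function `file_server_step`, and the `"ENOENT"` reply string are invented for illustration. Also, `receive` returns `None` here instead of blocking, as real IPC primitives usually do.

```python
from collections import defaultdict, deque


class MessageKernel:
    """Toy kernel offering only message-passing IPC between processes."""

    def __init__(self):
        self.mailboxes = defaultdict(deque)  # pid -> queued (sender, payload)

    def send(self, sender, receiver, payload):
        self.mailboxes[receiver].append((sender, payload))

    def receive(self, pid):
        box = self.mailboxes[pid]
        return box.popleft() if box else None  # None: nothing waiting


def file_server_step(kernel, server_pid, files):
    """One loop iteration of a hypothetical user-space file server."""
    msg = kernel.receive(server_pid)
    if msg is None:
        return
    client, filename = msg
    kernel.send(server_pid, client, files.get(filename, "ENOENT"))


kernel = MessageKernel()
kernel.send(10, 1, "motd.txt")  # client 10 asks server 1 for a file
file_server_step(kernel, 1, {"motd.txt": "welcome"})
print(kernel.receive(10))  # (1, 'welcome')
```

The file service lives entirely outside the kernel. The kernel only moves messages between mailboxes, which is the arrangement the paragraph above describes.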

Finally, a kernel must provide running programs with a method to make requests to access these facilities.

The kernel has full access to the system's memory and must allow processes to access this memory safely as they require it. Often the first step in doing this is virtual addressing, usually achieved by paging and/or segmentation. Virtual addressing allows the kernel to make a given physical address appear to be another address, the virtual address. Virtual address spaces may differ between processes: the memory that one process accesses at a particular (virtual) address may be different from the memory another process accesses at the same address. This allows every program to behave as if it were the only one running (apart from the kernel) and thus prevents applications from crashing each other.[5]
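The mapping from virtual to physical addresses can be illustrated with a single-level page table. This sketch assumes 4 KiB pages and represents each process's page table as a Python dictionary from virtual page number to physical frame number. The names `translate` and `PageFault` are hypothetical. Real systems typically use multi-level tables that the memory management unit walks in hardware.

```python
PAGE_SIZE = 4096  # assumed page size (4 KiB)


class PageFault(Exception):
    """Raised when a virtual page has no mapping."""


def translate(page_table, vaddr):
    """Translate a virtual address using a single-level page table.

    page_table maps virtual page number -> physical frame number.
    """
    vpn, offset = divmod(vaddr, PAGE_SIZE)
    if vpn not in page_table:
        raise PageFault(hex(vaddr))
    return page_table[vpn] * PAGE_SIZE + offset


# Two processes use the same virtual address but reach different physical frames.
proc_a = {0: 7, 1: 3}
proc_b = {0: 12}
print(hex(translate(proc_a, 0x0123)))  # 0x7123
print(hex(translate(proc_b, 0x0123)))  # 0xc123
```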

On many systems, a program's virtual address may refer to data that is not currently in memory. The layer of indirection provided by virtual addressing allows the operating system to use other data stores, such as a hard drive, to hold what would otherwise have to remain in main memory (RAM). As a result, operating systems can allow programs to use more memory than the system has physically available. When a program needs data that is not currently in RAM, the CPU signals the kernel with a page fault. The kernel responds by writing the contents of an inactive memory block to disk (if necessary) and replacing it with the data requested by the program. The program can then be resumed from the point where it was stopped. This scheme is generally known as demand paging.
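Demand paging can be simulated by counting page faults for a sequence of memory accesses. The sketch below assumes a fixed number of physical frames and uses first-in, first-out (FIFO) replacement, which was chosen for simplicity rather than taken from the text. A dirty (modified) page is written back to disk only when it is evicted, which corresponds to the "if necessary" case above. The function `demand_paging` is hypothetical.

```python
from collections import OrderedDict


def demand_paging(references, num_frames):
    """Simulate demand paging with FIFO replacement.

    references: sequence of (page, is_write) accesses.
    Returns (page_faults, disk_writes).
    """
    frames = OrderedDict()  # page -> dirty flag, kept in load order
    faults = writes = 0
    for page, is_write in references:
        if page not in frames:
            faults += 1  # page fault: the kernel must bring the page in
            if len(frames) == num_frames:
                _, dirty = frames.popitem(last=False)  # evict the oldest page
                if dirty:
                    writes += 1  # write the modified page back before reuse
            frames[page] = False  # page now loaded, still clean
        if is_write:
            frames[page] = True  # updating a value keeps its FIFO position
    return faults, writes


accesses = [(1, True), (2, False), (3, False), (1, False),
            (4, False), (2, True), (5, False)]
print(demand_paging(accesses, num_frames=3))  # (5, 2)
```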

Virtual addressing also allows memory to be partitioned into two disjoint areas, one reserved for the kernel (kernel space) and the other for applications (user space). The processor does not permit applications to address kernel memory, which prevents an application from damaging the running kernel. This fundamental partition of memory space has shaped the design of current general-purpose kernels and is almost universal in such systems, although some research kernels (e.g. Singularity) take other approaches.
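The processor's check that keeps applications out of kernel memory can be modeled as a comparison against a boundary address. This sketch assumes the 3 GiB/1 GiB split used by default on classic 32-bit Linux, where addresses from `0xC0000000` upward belong to the kernel. The names `check_access` and `ProtectionFault` are hypothetical. Real processors enforce the split per page through privilege bits, not with a single comparison.

```python
KERNEL_BASE = 0xC000_0000  # assumed 3 GiB/1 GiB split, as on classic 32-bit Linux


class ProtectionFault(Exception):
    """Raised when user-mode code touches kernel-space memory."""


def check_access(vaddr, user_mode):
    """Mimic the processor's privilege check on a memory access."""
    in_kernel_space = vaddr >= KERNEL_BASE
    if in_kernel_space and user_mode:
        raise ProtectionFault(f"user access to kernel address {vaddr:#010x}")
    return "kernel space" if in_kernel_space else "user space"


print(check_access(0x0804_8000, user_mode=True))   # user space
print(check_access(0xC010_0000, user_mode=False))  # kernel space
```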

To perform useful functions, processes need access to the peripherals connected to the computer, which the kernel controls through device drivers. A device driver is a computer program that enables the operating system to interact with a hardware device. It provides the operating system with information on how to control and communicate with a particular piece of hardware, which makes drivers a vital link between applications and the devices they use. The design goal of a driver is abstraction: the driver translates OS-mandated function calls (programming calls) into device-specific calls. In theory, a device should work correctly with a suitable driver. Device drivers are used for such things as video cards, sound cards, printers, scanners, modems, and LAN cards. The common levels of abstraction of device drivers are:

1. On the hardware side:
   - Interfacing directly.
   - Using a high-level interface (Video BIOS).
   - Using a lower-level device driver (file drivers using disk drivers).
   - Simulating work with hardware, while doing something entirely different.
2. On the software side:
   - Allowing the operating system direct access to hardware resources.
   - Implementing only primitives.
   - Implementing an interface for non-driver software (example: TWAIN).
   - Implementing a language, sometimes high-level (example: PostScript).

For example, to show the user something on the screen, an application would make a request to the kernel, which would forward the request to its display driver, which is then responsible for actually plotting the character or pixel.[5]
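The display-driver example above can be sketched as an abstract interface with interchangeable implementations. In this sketch, `DisplayDriver.put_char` stands in for an OS-mandated call. `TextModeDriver` translates that call into a device-specific cell format: a character plus an attribute byte, as in VGA text mode. `LoggingDriver` simulates the hardware while doing something entirely different. All of these names are hypothetical.

```python
from abc import ABC, abstractmethod


class DisplayDriver(ABC):
    """OS-mandated interface that every (hypothetical) display driver implements."""

    @abstractmethod
    def put_char(self, row, col, ch):
        """Draw one character at a text-cell position."""


class TextModeDriver(DisplayDriver):
    """Device-specific: stores (char, attribute) cells in a text-mode buffer."""

    def __init__(self, cols=80):
        self.cols = cols
        self.buffer = {}

    def put_char(self, row, col, ch):
        self.buffer[row * self.cols + col] = (ch, 0x07)  # 0x07: light grey on black


class LoggingDriver(DisplayDriver):
    """Simulated device: records calls instead of touching hardware."""

    def __init__(self):
        self.log = []

    def put_char(self, row, col, ch):
        self.log.append((row, col, ch))


def kernel_write(driver, row, text):
    """Kernel side: forward an application's request to whichever driver is loaded."""
    for col, ch in enumerate(text):
        driver.put_char(row, col, ch)


screen = TextModeDriver()
kernel_write(screen, 1, "hi")
print(screen.buffer[80])  # ('h', 7)

fake = LoggingDriver()
kernel_write(fake, 0, "ok")
print(fake.log)  # [(0, 0, 'o'), (0, 1, 'k')]
```

`kernel_write` never inspects the concrete driver type. Swapping drivers changes the device-specific behavior without touching the kernel-side code.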

A kernel must maintain a list of available devices. This list may be known in advance (e.g. on an embedded system where the kernel will be rewritten if the available hardware changes), configured by the user (typical on older PCs and on systems that are not designed for personal use), or detected by the operating system at run time (normally called plug and play). In a plug-and-play system, a device manager first scans different hardware buses, such as Peripheral Component Interconnect (PCI) or Universal Serial Bus (USB), to detect installed devices, then searches for the appropriate drivers.
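The plug-and-play sequence (scan the buses, then search for drivers) can be sketched as a lookup of device identifiers in a driver table. The vendor and device IDs, the driver names, and the `probe` function are hypothetical. Real PCI and USB enumeration reads identifiers from each device's configuration space or descriptors.

```python
# Hypothetical (vendor_id, device_id) pairs found on two buses.
BUS_DEVICES = {
    "pci": [(0xAAAA, 0x0001), (0xBBBB, 0x0002)],
    "usb": [(0xCCCC, 0x0003)],
}

# Hypothetical driver registry: which driver claims which IDs.
DRIVER_TABLE = {
    (0xAAAA, 0x0001): "net_driver",
    (0xCCCC, 0x0003): "kbd_driver",
}


def probe(buses, drivers):
    """Scan each bus, then look up a driver for every device found."""
    bound, unclaimed = {}, []
    for bus, devices in buses.items():
        for ids in devices:
            driver = drivers.get(ids)
            if driver is None:
                unclaimed.append((bus, ids))  # no driver: leave the device idle
            else:
                bound[(bus, ids)] = driver
    return bound, unclaimed


bound, unclaimed = probe(BUS_DEVICES, DRIVER_TABLE)
print(len(bound), "bound,", len(unclaimed), "unclaimed")  # 2 bound, 1 unclaimed
```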

As device management is a very OS-specific topic, these drivers are handled differently by each kind of kernel design. In every case, though, the kernel has to provide the I/O to allow drivers to physically access their devices through some port or memory location. Important decisions have to be made when designing the device management system, because in some designs accesses may involve context switches, making the operation very CPU-intensive and easily causing a significant performance overhead.