Games, even as late as the PSP, often used custom encoding (usually described in table files for ROM hacking purposes).

As a kid you might have done something like A=1, B=2, C=3, D=4.....

Computers do similar things, with the values usually written in hex. The most common traditional standard was ASCII ( https://www.asciitable.com/ ), though there are plenty of others. Japanese has a few, as the 8 bit encoding (2^8=256 combinations before having to swap out, not enough for Japanese with its thousands of characters) of ASCII and most western computing did not work for it. Shift JIS ( http://rikai.com/library/kanjitables/kanji_codes.sjis.shtml ) and EUC-JP ( http://rikai.com/library/kanjitables/kanji_codes.euc.shtml ) are among the more noted there. This was a mess so Unicode got invented ( https://www.joelonsoftware.com/2003...-about-unicode-and-character-sets-no-excuses/ ).
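To see why the standards above do not agree with each other, here is a small sketch that encodes the same two kanji under Shift JIS, EUC-JP and UTF-8 using Python's built in codecs. The function name and the sample text are my own picks for illustration, and a game using a custom table will match none of these.

```python
def encode_all(text: str) -> dict[str, bytes]:
    """Encode the same text under a few standard encodings for comparison."""
    codecs = ("shift_jis", "euc_jp", "utf-8")
    return {name: text.encode(name) for name in codecs}


if __name__ == "__main__":
    # Sample text is arbitrary; any Japanese string works.
    for name, raw in encode_all("日本").items():
        # Print the bytes as hex pairs, the way a hex editor would show them.
        print(f"{name:>9}: {raw.hex(' ')}")
```

Same characters, different bytes each time, which is why you have to know (or work out) the encoding before the hex means anything.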

Games, however, often did their own thing. Today it is more common to see the standards noted above, but back then custom was probably the default.

You get to figure it out. I cover a bunch of ways in my ROM hacking documentation ( https://gbatemp.net/threads/gbatemp-rom-hacking-documentation-project-new-2016-edition-out.73394/ ) but you might as well start with relative search. While I said custom above, most of the time devs would still do the A=1, B=2... routine, just with a different starting number.

A program called Monkey-Moore ( https://www.romhacking.net/utilities/513/ ) is my usual suggestion for that. Find a section of text in the game, preferably without any fancy characters, capitals (while ASCII noted above has a neat encoding trick where you add or subtract a fixed value to switch case, you don't necessarily know that is the case here), placeholders, new lines or similar. Search for that in the program and it might well unlock 90% of the table right there and then (numbers, punctuation, bold/italic/underlined text/formatting, and odd characters might still have to be found). As a quick test I might even skip finding something in the game, assume the phrase " the " (without quotes) will exist somewhere in the text and search for that. This does not work so well on Japanese, as there is no official order of characters the way there is for alphabets, but you do still have some options there.

If there is compression involved (it did not look like it from that hex shot) it will possibly mess this up, and just because most encodings intended for English are relative encodings does not mean they all are.
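For the curious, the idea behind relative search can be sketched in a few lines. This is not how Monkey-Moore itself is written, just a minimal illustration under the assumptions that each character is one byte and that lowercase letters are stored in alphabetical order from some unknown starting value. The function name is made up, and the phrase should be lowercase letters only (no spaces or punctuation, as those need not follow the alphabet).

```python
def relative_search(data: bytes, phrase: str) -> list[tuple[int, int]]:
    """Find places where byte-to-byte differences match the phrase.

    Returns (offset, value_of_a) pairs, where value_of_a is the byte that
    would stand for 'a' if the match is real text.
    Assumes one byte per character and letters stored in alphabetical order.
    """
    codes = [ord(c) for c in phrase]
    # Differences between neighbouring letters, e.g. "the" gives [-12, -3].
    diffs = [b - a for a, b in zip(codes, codes[1:])]
    hits = []
    for start in range(len(data) - len(phrase) + 1):
        window = data[start:start + len(phrase)]
        if all(window[i + 1] - window[i] == d for i, d in enumerate(diffs)):
            value_of_a = window[0] - (codes[0] - ord("a"))
            # Discard matches where a to z would not fit in a single byte.
            if 0 <= value_of_a <= 255 - 25:
                hits.append((start, value_of_a))
    return hits


if __name__ == "__main__":
    # Hypothetical game data where a=0x10, so "hello" is 17 14 1B 1B 1E.
    sample = bytes([0x00, 0xFF, 0x17, 0x14, 0x1B, 0x1B, 0x1E, 0x00])
    for offset, value_of_a in relative_search(sample, "hello"):
        print(f"match at {offset:#x}, a={value_of_a:#04x}")
```

Once you have the value for "a" you can fill in b through z in the table straight away. Short phrases will throw up false positives in a large file, so longer phrases are better if you have them.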

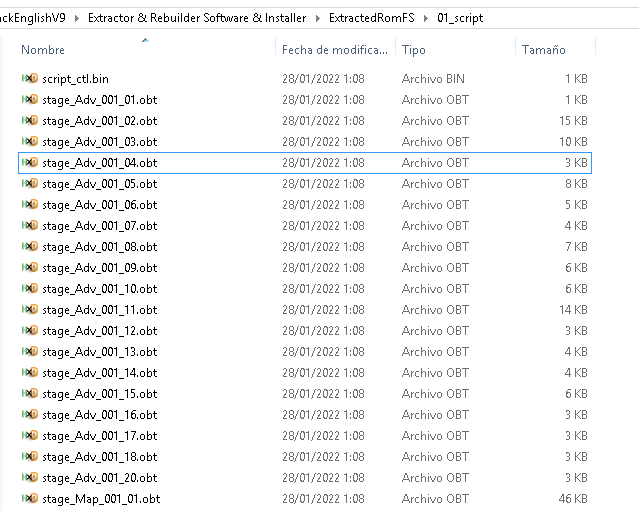

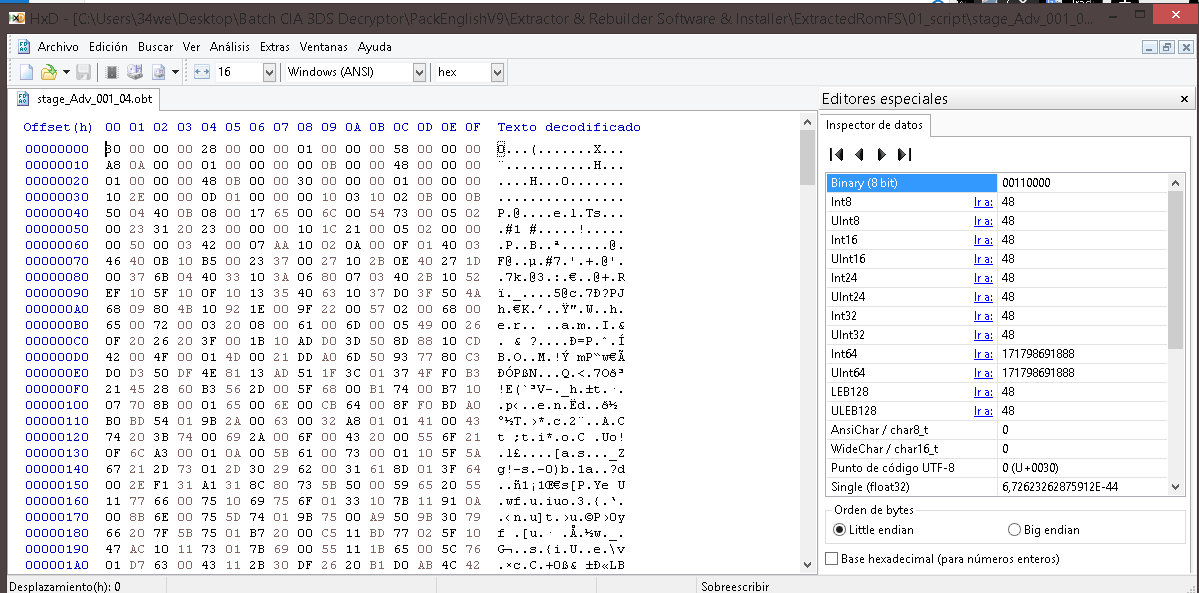

I will also note the start of text files, and of many file formats in general, is a set of pointers (something I usually liken to the contents page of a book), so your hex shots up there might be those. Indeed, do a byte flip on those to sort out endianness and it mostly looks like numbers counting up, which is usually a telltale sign of such things (text being rather more random*, whereas pointers tend to point further and further into the text/file the further down the list you go**, though not always as you might need to go somewhere else).

*though not really random as my documents will cover various aspects of statistical analysis to derive encodings/tables.

**Indeed, do the flip, find the end of sentences/sections in the file (might not be directly at what the numbers say) and compare the differences in location. You will want to be doing this, or otherwise figuring out pointers, anyway, as pointers are what allow you to change the length of text sections rather than having to match or go smaller. Back to the contents page analogy: think what would happen to someone counting pages along if you stuffed a bunch more in the middle of the book and ripped some out from elsewhere.
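The byte flip and compare routine can be sketched as below. Everything about the layout here is an assumption for illustration: little endian values, 4 bytes each, sitting at the very start of the file and counting from the start of the file. Your game may use 2 byte values, a header before the table, or offsets relative to somewhere else, and all the function names are made up.

```python
import struct


def read_pointer_table(data: bytes, count: int, width: int = 4) -> list[int]:
    """Read `count` little endian values from the start of the data."""
    code = {2: "H", 4: "I"}[width]  # unsigned 16 or 32 bit
    return list(struct.unpack_from("<" + code * count, data, 0))


def counts_up(pointers: list[int]) -> bool:
    """Numbers that only ever increase are a telltale sign of pointers."""
    return all(a < b for a, b in zip(pointers, pointers[1:]))


def section_lengths(pointers: list[int]) -> list[int]:
    """Gaps between pointers; compare with where sections end in the file."""
    return [b - a for a, b in zip(pointers, pointers[1:])]


def repoint_after_edit(pointers: list[int], edited_index: int, delta: int) -> list[int]:
    """Shift every pointer after the edited section by the change in length."""
    return [p + delta if i > edited_index else p for i, p in enumerate(pointers)]


if __name__ == "__main__":
    # Hypothetical table: 10 00 00 00 flips to 0x00000010 and so on.
    table = bytes.fromhex("10000000 1c000000 2f000000 40000000")
    pointers = read_pointer_table(table, 4)
    print([hex(p) for p in pointers], counts_up(pointers))
    print(section_lengths(pointers))
    # Section 1 grew by 5 bytes, so everything after it moves along by 5.
    print(repoint_after_edit(pointers, 1, 5))
```

The last function is the contents page problem in miniature: make one section longer and every entry after it has to be bumped along, or the game will start reading text from the wrong place.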