"Thank you!" to @Alexander1970 and @Blauhasenpopo for their patience in previous profile messages about this.

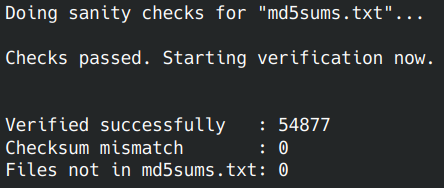

Seeing the following after all this work was very satisfactory:

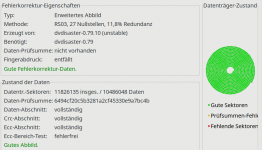

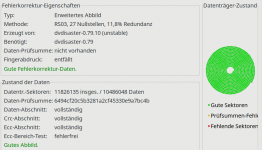

Pinging @IC_ … because you gave some " " in profiles and finally

Pinging @Nikokaro … because I won't give up trying to convince you to create a proper backup (or just any backup for starters).

In my search for backup methods resistant to as many threats as possible, I decided to systematically copy my data to BD-R. Previously I used CD/DVD/BD only in an unsystematic way, as an additional safeguard for a few selected files: the most important stuff.

Now I want to create a backup of 2 to 4TB, all on Blu-ray discs.

Having already been lectured in my status that my method (to condense it into blunt single-word form) sucks, I'll explain what I did and why in more detail (for nobody wanting to read this).

For the amounts of data we have nowadays, BD-R discs, especially the cost-effective single-layer ones, are small media.

Optical discs are prone to scratches. Parts might become unreadable because of a small carelessness.

Standard UDF file system has limitations compared to common Linux file systems.

There is no integrity test in user space.

Automatically splitting the data becomes necessary. A helpful script can be found in the genisoimage package. This nice script, dirsplit, divides a given folder into chunks/volumes of a predefined size. The resulting volumes get filled in a smart manner so that only a small amount of space is wasted.
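To give an idea of what "filled in a smart manner" can mean, here is a minimal sketch of a first-fit-decreasing packer. It is not dirsplit's actual algorithm, which I have not checked. The function name `split_into_volumes` and the tab-separated input format are made up for illustration.

```bash
#!/usr/bin/env bash
# Hypothetical helper, NOT dirsplit itself: first-fit decreasing bin packing.
# stdin:  lines of "size<TAB>name" (size as an integer, e.g. bytes)
# stdout: lines of "volume<TAB>size<TAB>name"
split_into_volumes() {
    local capacity=$1
    local -a free=()            # remaining space per volume, index 0 = volume 1
    local size name v placed
    # Largest items first, so the small ones can fill the leftover gaps.
    while IFS=$'\t' read -r size name; do
        if (( size > capacity )); then
            echo "item does not fit on any volume: $name" >&2
            return 1
        fi
        placed=0
        for v in "${!free[@]}"; do
            if (( size <= free[v] )); then
                free[v]=$(( free[v] - size ))
                placed=1
                break
            fi
        done
        if (( placed == 0 )); then
            v=${#free[@]}       # open a new volume
            free[v]=$(( capacity - size ))
        fi
        printf '%d\t%d\t%s\n' "$(( v + 1 ))" "$size" "$name"
    done < <(sort -t $'\t' -k1,1nr)
}
```

With GNU du, something like `du -sb /home/Daten/* | split_into_volumes 20000000000` would feed it real sizes. For actual use, dirsplit is the better choice because it also creates the volume folders or catalogs.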

Optical discs are prone to scratches. Parts might become unreadable because of a small carelessness.

Additional error correction (ECC) should be applied to guard against this and the infamous “rot” associated with optical discs.

Standard UDF file system has limitations compared to common Linux file systems.

A file functioning as a container for a Linux file system is desirable. And I want the data securely encrypted. Veracrypt should suffice. The people behind this awesome piece of software seem to be even more paranoid than me.

There is no integrity test in user space.

Drives do their EDC/ECC stuff on their own, but ultimately we have to trust them to reproduce the correct data (which isn't always the case due to the physics of the analog parts/signal processing → off-topic). Well. Other media don't offer integrity verification either, so this is not really a particular downside of discs.

I wanted to include MD5 checksum verification. MD5 is fast, easy and good enough (before anybody lectures me again). No need for any super secure hash algo here. Accidental corruption that does not lead to a checksum mismatch is HIGHLY unlikely.
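The checksum part can be sketched like this, assuming GNU coreutils and findutils. The function names and the idea of keeping the list outside the data folder are my illustration here, not a fixed recipe.

```bash
#!/usr/bin/env bash
# write_checksums DIR LIST: record an MD5 sum for every regular file below DIR.
# Paths are stored relative to DIR so the list still works after a restore
# into a different folder. Keep LIST outside of DIR.
write_checksums() {
    local dir=$1 list=$2
    ( cd "$dir" && find . -type f -print0 | sort -z | xargs -0 -r md5sum ) > "$list"
}

# verify_checksums DIR LIST: re-read every file and compare against LIST.
# Prints only failures; the exit status is non-zero if anything mismatches.
verify_checksums() {
    local dir=$1 list=$2
    ( cd "$dir" && md5sum --check --quiet ) < "$list"
}
```

Run `write_checksums` once on the original data before splitting, and `verify_checksums` on the merged folder after a test restore.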

For the first test I used only a small subset of my data: a collection of audio books/audio dramas totalling 346GB. Allowing for file system overhead and some spare space (and some other data on the last disc), this meant using 18 BD-R, each one carrying a 20GB Veracrypt container plus ECC data added by dvdisaster.
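The disc count is just a rounded-up division. A small sketch follows; the function name is hypothetical, and the 2000 and 4000 figures assume decimal terabytes and the same 20GB of payload per disc.

```bash
#!/usr/bin/env bash
# discs_needed DATA_GB PER_DISC_GB: integer division, rounded up.
discs_needed() {
    local data_gb=$1 per_disc_gb=$2
    echo $(( (data_gb + per_disc_gb - 1) / per_disc_gb ))
}

discs_needed 346 20     # the audio book test set: 18
discs_needed 2000 20    # 2TB: 100
discs_needed 4000 20    # 4TB: 200
```

This counts only the main discs, not any extra discs for additional ECC.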

It was a lot of work:

- Preparing text file with MD5 checksums

- dirsplit

- Creating 20 different containers (believe me, this is tedious with Veracrypt)

- Little for loop (see spoiler below) distributing the given volume folders into the mounted containers

- Create ISO images with Imgburn, each one containing only one container file

- Inflate the ISO images with dvdisaster to single layer BD-R size

- Create an additional file-based level of ECC on 2 extra BD-R from a different manufacturer than the main backup

- Burn each image to a BD-R with Imgburn, verify enabled

- Print nice label on each disc

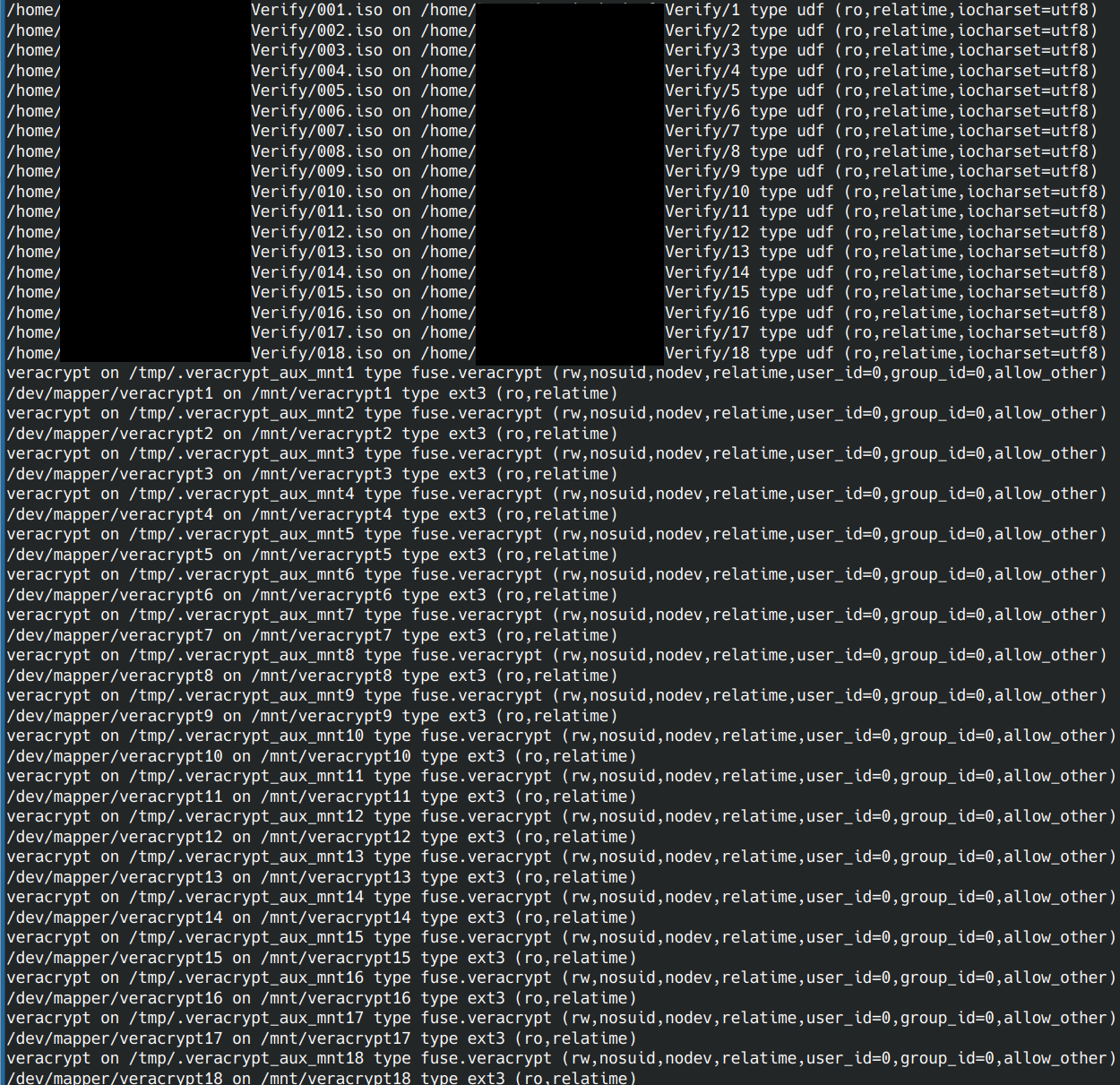

```bash
# Copy each dirsplit volume folder into its mounted Veracrypt container.
for i in {1..18}; do
    rsync -av "/home/Daten/vol_$i/" "/mnt/veracrypt$i"
done
```

That was the backup task. But a backup is only useful when it can be restored. Any sensible backup must be followed by testing the restore procedure.

- Dump each BD-R on second computer

- Check each image with dvdisaster (good image, good ECC)

- Mount all images (boy, this sucks, see spoiler below)

- Merge all data into empty folder with similar for loop

- Verify checksums
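The merge step could look roughly like this, as a counterpart to the backup loop. The function name `merge_volumes` and the destination path in the usage note are hypothetical, and `cp -a` stands in for `rsync -av` so the sketch needs nothing beyond coreutils.

```bash
#!/usr/bin/env bash
# merge_volumes SRC_PREFIX COUNT DEST
# Copies the contents of SRC_PREFIX1 .. SRC_PREFIXCOUNT into DEST,
# stopping at the first missing volume or failed copy.
merge_volumes() {
    local src_prefix=$1 count=$2 dest=$3 i
    mkdir -p "$dest" || return 1
    for (( i = 1; i <= count; i++ )); do
        if [ ! -d "$src_prefix$i" ]; then
            echo "volume not mounted: $src_prefix$i" >&2
            return 1
        fi
        # The trailing "/." copies the folder's contents, hidden files included.
        cp -a "$src_prefix$i/." "$dest/" || return 1
    done
}
```

Assuming the containers are mounted the same way as during backup, the call would be something like `merge_volumes /mnt/veracrypt 18 /home/Daten/restore_test`, followed by the checksum verification on the destination folder.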

Conclusion:

A f…cking load of work compared to just rsync -av from one HDD/SSD to another. But this backup of static data to non-erasable, non-rewritable media was overdue.

Make fun of me if you want. One thing is clear: This additional copy will provide resilient fallback in case of complete loss of all easy accessible copies. The off-site problem is currently still unsolved because I have no friends and would not trust anybody anyway… but:

Seeing the following after all this work was very satisfactory:

Equally satisfactory:

(Sorry: This application just took German language from system settings. The green text says: "Good error correction data" and "Good image")

A nice side effect is the ease of backing up the created containers to online storage now (once I decide which one to pay for). Uploading a few giant files (on the order of 500GB or even more) is not feasible with a mediocre connection: they are hard to handle and a hassle if data has to be replaced. Uploading decrypted data is not an option, and file-by-file encryption stuff like EncFS is said to have known weaknesses in case of changes to data → multiple versions (which could happen accidentally before uploading new files).

Using 3-layer (≈10¹¹ bytes) or 4-layer BDXL (≈1.28*10¹¹ bytes) would greatly decrease the effort, but the media are outrageously expensive: ≈190€ per TB for M-DISC.